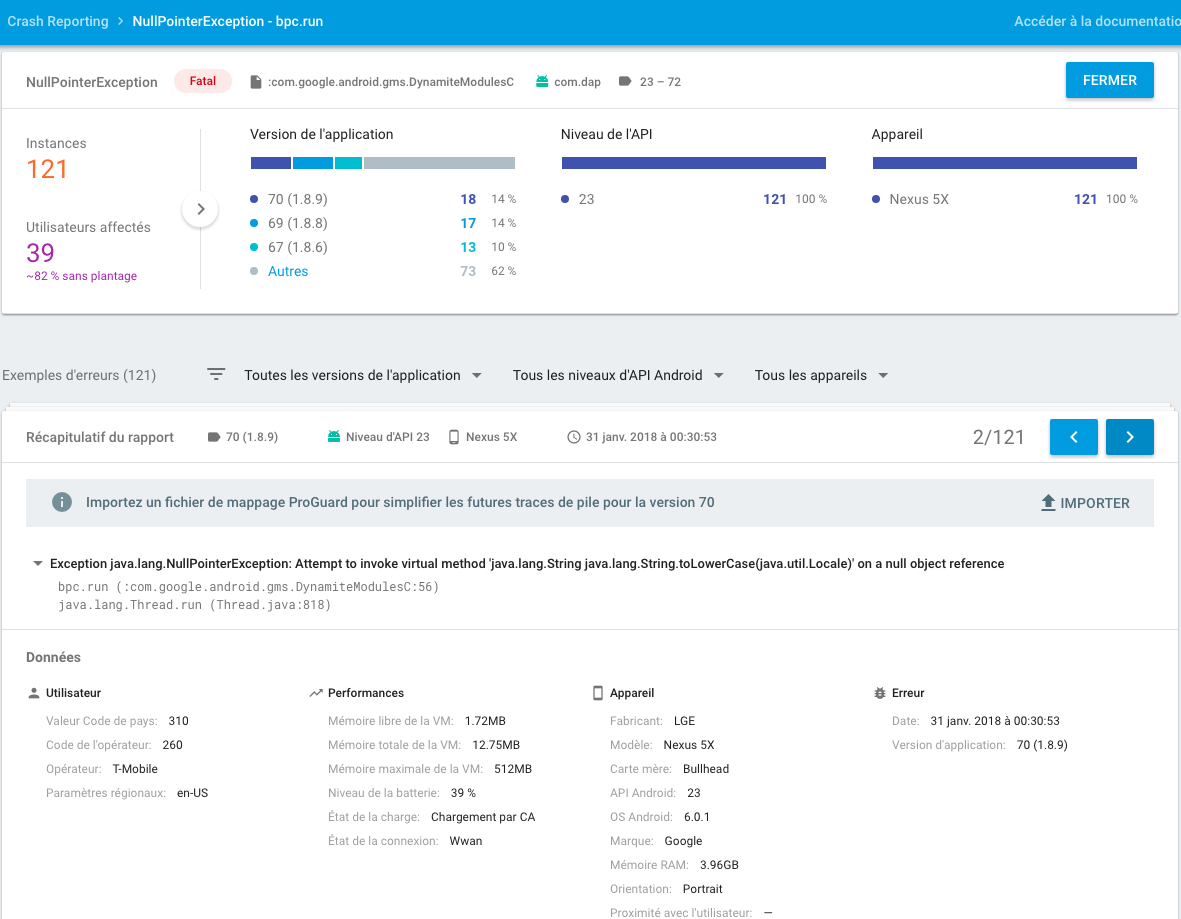

We're seeing an issue where activation collection on a silo seems either to stop or to take a very long time. The total activation count begins to increase at a steady rate while the recently used activation count stays steady. Eventually this leads to the server running out of memory and crashing. In the accompanying histogram you can see logs for the 'Before collection', 'Starting DestroyActivations', and 'After collection' events. Typically they occur in quick succession, but it seems that something happens that suddenly makes the process take a very long time. Using the IManagementGrain interface to trigger a manual activation collection does seem to go quickly (but the timespan used in that case may not match the default lifetime of the grain). We've only seen this in our production environment; we haven't been able to reproduce it in a test scenario. It does seem to happen more frequently in high-traffic scenarios.

When the collections start to take a long time we also start to see an exception happen about once a minute:

Caught and ignored exception: System.NullReferenceException with mesagge: Object reference not set to an instance of an object. thrown from timer callback GrainTimer.Catalog.GCTimer. TimerCallbackHandler:[SystemTarget: S:235355348Catalog@S0000000e]->System.Threading.Tasks.Task OnTimer(System.Object)

at Orleans.Runtime.ActivationCollector.Bucket.CancelAll()

at Orleans.Runtime.ActivationCollector.DequeueQuantum(IEnumerable`1& items, DateTime now)

at Orleans.Runtime.ActivationCollector.ScanStale()

at Orleans.Runtime.Catalog.d__45.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess(Task task)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Orleans.Runtime.GrainTimer.d__20.MoveNext()

It looks like the 'CancelAll' call in Bucket doesn't check whether the ActivationData object is null. I assume that there is a concurrent call to Bucket.TryRemove, which is nulling out the ActivationData object in the dictionary.
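To make the suspected failure mode concrete, here is a minimal sketch of a bucket that "removes" entries by nulling the dictionary value, together with a CancelAll that skips those nulled entries. This is not the Orleans source: `FakeActivation` and `SketchBucket` are hypothetical stand-ins, and the idea that TryRemove swaps the value for null is the assumption stated above, not something confirmed here.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading;

// Hypothetical stand-in for an activation record; not the Orleans ActivationData type.
public sealed class FakeActivation
{
    private int cancelled; // 0 = live, 1 = cancelled

    public FakeActivation(string id) { Id = id; }

    public string Id { get; }

    // Returns true only for the first caller that cancels this activation.
    public bool TryCancel()
    {
        return Interlocked.CompareExchange(ref cancelled, 1, 0) == 0;
    }
}

// Hypothetical bucket that keeps the key and nulls the value on removal (assumed behavior).
public sealed class SketchBucket
{
    private readonly ConcurrentDictionary<string, FakeActivation> items =
        new ConcurrentDictionary<string, FakeActivation>();

    public void Add(FakeActivation item) { items[item.Id] = item; }

    // Leaves the key in place and swaps the stored value for null.
    public bool TryRemove(FakeActivation item)
    {
        return items.TryUpdate(item.Id, null, item);
    }

    // Cancels every live entry and returns the ones this call cancelled.
    public List<FakeActivation> CancelAll()
    {
        var result = new List<FakeActivation>();
        foreach (var pair in items)
        {
            var activation = pair.Value;
            if (activation == null) continue; // the guard that appears to be missing
            if (activation.TryCancel()) result.Add(activation);
        }
        return result;
    }
}
```

In this sketch, adding two activations, calling TryRemove on one of them, and then calling CancelAll returns only the other one. Without the null check, the same sequence would dereference the nulled value and throw a NullReferenceException, which would match the timer exception above if the assumption about TryRemove holds.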

Two things are notable about our setup. First, we have a very short lifetime on some of our grains. The grain state can be large, so we want to remove it from memory quickly. Originally we had it set to 15 minutes, then five, and eventually one minute. I'm not sure whether the above problem occurs more frequently with lower lifetimes. Second, our grains are currently sending very large messages in many cases, sometimes 1 MB or more. We also get warning messages that activation turns are taking too long, though the reported turn time is usually between 500 and 1000 milliseconds.

A process dump of one of the servers in a bad state showed two worker threads actively processing messages, and the rest waiting on BlockingCollection<>s (we don't use those in our code so I assume that is the AsynchAgent queue). The CPU averages around 50%.
